Investor expectations are shifting fast, and by the 2026 proxy season boards will be asked to prove they’re ready. That was the central message from a recent Corporate Board Member Briefing moderated by Dominique Shelton Leipzig, CEO of Global Data Innovation and one of the nation’s leading authorities on AI, privacy and data governance. With over 30 years in Big Law, she has trained 50,000+ professionals and advises Fortune 500 boards on responsible innovation.

Joining her were Roosevelt Giles and Christine Heckart. Giles is a global expert in business transformation and cyber strategy who chairs the Stakeholder Impact Foundation and founded Endpoint Ventures, with investments across five continents. Heckart is a veteran technology CEO and board director, and the founder and CEO of Xapa, an AI platform accelerating workforce transformation. Together, they made a clear case: fiduciary duty now explicitly extends to AI oversight, and the era of “passive awareness” is over.

Why AI Is a Board-Level Fiduciary Issue

Giles framed AI as a systemic risk, not merely an operational one. Because AI can affect earnings, TSR, ROIC and cost structure, it falls squarely within the board’s duty to protect and grow long-term shareholder value. When novel technologies outpace legacy charters and bylaws, fiduciary duty becomes the “gap-filler,” he said—the standard by which courts and investors will judge whether boards asked the right questions and provided appropriate oversight.

Shelton Leipzig noted that BlackRock, Allianz and proxy advisors like Glass Lewis have already updated their stewardship guidelines, and ISS is close behind. By the 2026 proxy season, these investors and advisors expect boards to demonstrate AI literacy and to document director training and oversight frameworks in proxy statements. Boards that fall short could see their directors face withhold recommendations, reputational damage and, if governance lapses are material, Caremark-style derivative claims.

The TRUST Oversight Framework (What Boards Should Ask)

To help boards cut through sprawling, overlapping regulations (EU AI Act, NIST, ISO and dozens of new state laws), Shelton Leipzig presented the distilled TRUST framework—five practical pillars for board oversight:

- Triage. Ensure AI uses are mapped to corporate strategy. Identify where AI is deployed and which laws and risk tiers apply (prohibited, high or low). Many “shadow AI” projects fall outside enterprise priorities—triage surfaces and stops them.

- Right data to train, and rights to train. Boards should confirm that training data is accurate, lawfully sourced and covered by the necessary IP and privacy rights. Poor data hygiene can derail programs and create liability.

- Uninterrupted testing, monitoring, and auditing. Bake accuracy thresholds, escalation paths and human safeguards directly into systems—don’t rely on policies sitting on a shelf. If you’d never allow a customer-service rep to curse at a customer, your chatbot shouldn’t either. Code standards of conduct into AI and monitor for drift.

- Supervising humans. Culture is the bedrock. Train employees to recognize when AI outputs deviate from policy or quality standards, and to “see something, say something.” Front-line awareness often catches defects first; reward it.

- Technical documentation. Maintain artifacts to diagnose and correct model drift. Hallucinations are inevitable; what matters is monitoring, detection and remediation.
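
For technology teams translating the first and third pillars into practice, the idea of triaging AI uses and baking accuracy thresholds and escalation paths "directly into systems" can be illustrated with a minimal Python sketch. Everything here is an assumption made for illustration: the class and function names, the example use cases, the threshold values and the escalation labels are hypothetical and are not part of the TRUST framework as presented. Only the three risk tiers (prohibited, high, low) come from the briefing.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class RiskTier(Enum):
    # Tiers named in the briefing; how a use case is assigned is up to counsel.
    PROHIBITED = "prohibited"
    HIGH = "high"
    LOW = "low"


@dataclass
class AIUseCase:
    name: str
    risk_tier: RiskTier
    strategic_priority: Optional[str]  # None means not mapped to strategy ("shadow AI")
    min_accuracy: float                # hypothetical accuracy threshold for this use


def triage(use_cases: List[AIUseCase]) -> List[str]:
    """Return names of use cases that are prohibited or not tied to strategy."""
    return [
        uc.name
        for uc in use_cases
        if uc.risk_tier is RiskTier.PROHIBITED or uc.strategic_priority is None
    ]


def check_accuracy(use_case: AIUseCase, observed_accuracy: float) -> str:
    """Compare observed accuracy with the threshold and pick an escalation path."""
    if observed_accuracy >= use_case.min_accuracy:
        return "ok"
    # Below threshold: high-risk uses go to a human, low-risk uses are logged.
    if use_case.risk_tier is RiskTier.HIGH:
        return "escalate_to_human"
    return "log_for_audit"


# Hypothetical inventory for illustration only.
inventory = [
    AIUseCase("support_chatbot", RiskTier.HIGH, "customer experience", 0.95),
    AIUseCase("side_project_scraper", RiskTier.LOW, None, 0.80),
]

print(triage(inventory))                     # ['side_project_scraper']
print(check_accuracy(inventory[0], 0.90))    # escalate_to_human
print(check_accuracy(inventory[0], 0.97))    # ok
```

The design point is that the threshold and the escalation path live next to the use case itself, so a drop in measured accuracy triggers a defined response rather than relying on someone remembering a written policy. A real deployment would also need drift metrics, logging and documented ownership, which this sketch leaves out.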

From Compliance Governance to Data Governance

Most boards still route technology oversight to Audit. Giles cautioned that adding AI and cyber to an already oversubscribed audit agenda risks process failure and greater Caremark exposure. His recommendation: create a Technology or Data & Technology Committee that unifies oversight of AI, cyber, data governance and digital transformation and reports to the full board.

Where refreshment is slow or skill gaps exist, Giles advises adding non-voting advisory directors for 24-month rotations. This quickly injects expertise, builds institutional knowledge and creates a pipeline for future fiduciary directors.

Readiness Is Mostly About People, Not Tools

Heckart emphasized that successful AI programs depend more on change management than on algorithms. Speaking as a CEO and a public board member, she explained that her team reviews AI applications and controls quarterly with Global Data Innovation against the TRUST framework, and pairs those reviews with enterprise-wide training and AI “coaches” that guide employees on responsible use.

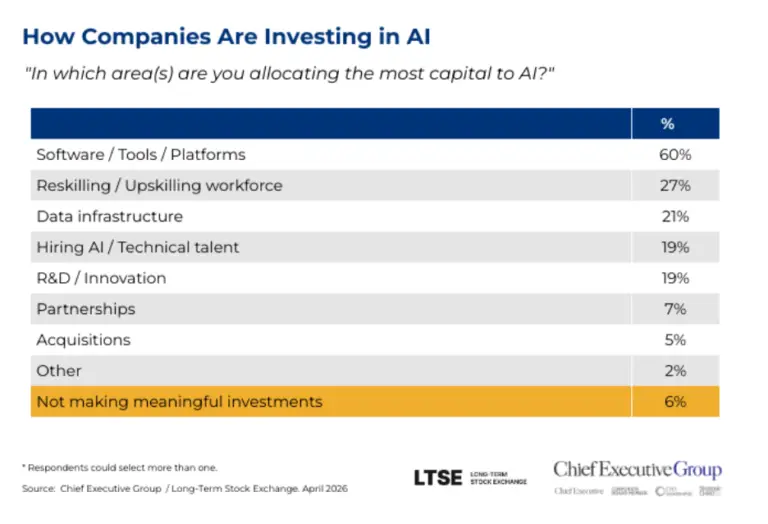

Speakers cited published research indicating that:

- Most AI failures are people/process failures, not technology defects.

- Companies over-invest in tools and under-invest in training, leaving large productivity and risk-reduction gains on the table.

- Organizations that start with human-AI collaboration (vs. pure replacement) see stronger performance improvements.

Heckart’s punchline: treat every employee as a manager of AI. With generative tools embedded in productivity suites, even early-career staff are now supervising “digital interns.” That requires judgment training—context setting, quality checks, escalation—well beyond technical upskilling.

Activists, Index Funds, and the New Scorecard

Expect activist investors to mine disclosures, talk to customers and suppliers and benchmark director track records. If AI spending is high and returns are vague—or risk controls look performative—boards will be challenged on strategy, skills and speed. Activists coordinate with large index funds and proxy advisors; tone and substance in a board’s response matter. The questions they’re asking mirror the TRUST pillars and basic capital discipline: Where’s the ROI? Where’s the risk control? Who on the board actually understands this?

Five Questions Directors Can Ask This Quarter

- Triage: Which AI initiatives directly advance our top strategic priorities—and which fall outside our mission or risk appetite?

- Right data: Are we confident our training data is accurate, ethically sourced and covered by the proper IP, privacy and business rights?

- Uninterrupted monitoring: How are we testing and auditing models for accuracy, bias and drift—and how quickly can we detect and correct errors?

- Supervision: Are clear escalation paths in place when AI outputs deviate from policy or ethics—and who is accountable for intervention?

- Technical documentation: Can we produce the artifacts proving that oversight, monitoring and remediation occurred when issues arose?

The Imperative: Move Fast—With Guardrails

Across the hour, one theme kept returning: The bigger risk for incumbents is moving too slowly. AI is reshaping cost curves, customer experience and business models. Boards must experiment boldly yet govern responsibly—with visible director literacy, an actionable framework, the right committee structure and people-first readiness that turns pilots into performance.

Done well, AI becomes part of the company’s operating system—aligned to strategy, measured for outcomes, monitored for risk and powered by a capable workforce. That’s not just governance; it’s durable advantage.